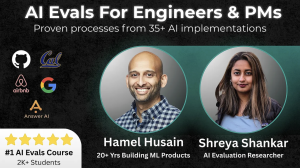

AI Evals For Engineers & PMs – No.1 Course at Maven


What you can learn from AI Evals For Engineers & PMs – No.1 Course at Maven?

Learn proven approaches for quickly improving AI applications. Build AI that works better than the competition, regardless of the use case.

This course is hands-on: get ready to sweat through exercises, code, and data! We will meet twice a week for four weeks, with generous office hours (see the course content below).

We will also host a Discord community where you can talk with us and with each other. In return for your effort, you will gain skills that set you apart from the competition. All sessions will be recorded and available to students asynchronously.

Course Content

Lesson 1: Fundamentals & Lifecycle of LLM Application Evaluation

- Why evaluation matters for LLM applications – business impact and risk mitigation

- Challenges unique to evaluating LLM outputs – common failure modes and context-dependence

- The lifecycle approach from development to production

- Basic instrumentation and observability for tracking system behavior

- Introduction to error analysis and methods for categorizing failures
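To make "basic instrumentation" concrete, here is a minimal sketch of recording what each step of an LLM application did, assuming an in-memory list is an acceptable stand-in for a real trace store. The `traced` decorator, `TRACE_LOG`, and the `answer` function are hypothetical illustrations, not tools from the course.

```python
import functools
import time
import uuid

# Hypothetical in-memory sink; a production system would send records to a trace store.
TRACE_LOG = []


def traced(step_name):
    """Decorator that records inputs, output, latency, and errors of one step."""
    def decorator(fn):
        @functools.wraps(fn)
        def wrapper(*args, **kwargs):
            record = {"id": str(uuid.uuid4()), "step": step_name,
                      "args": args, "kwargs": kwargs}
            start = time.perf_counter()
            try:
                result = fn(*args, **kwargs)
                record["output"] = result
                return result
            except Exception as exc:
                record["error"] = repr(exc)  # keep the failure for later error analysis
                raise
            finally:
                # Runs on success and on failure, so every call leaves a record.
                record["latency_s"] = time.perf_counter() - start
                TRACE_LOG.append(record)
        return wrapper
    return decorator


@traced("answer")
def answer(question: str) -> str:
    # Hypothetical stand-in for an LLM call.
    return f"Echo: {question}"
```

Records like these are the raw material that error analysis later reads through and categorizes.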

Lesson 2: Systematic Error Analysis

- Bootstrapping data through effective synthetic data generation

- Annotation strategies and quantitative analysis of qualitative data

- Translating error findings into actionable improvements

- Avoiding common pitfalls in the analysis process

- Practical exercise: Building and iterating on an error tracking system
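As a minimal sketch of turning qualitative annotations into numbers, assume a reviewer has tagged each trace with zero or more failure categories. The category names and the `traces` structure below are hypothetical. Counting how many traces show each failure points to where improvement effort pays off first.

```python
from collections import Counter

# Hypothetical annotated traces: an empty list means the reviewer found no failure.
traces = [
    {"id": "t1", "failures": ["hallucinated_fact"]},
    {"id": "t2", "failures": []},
    {"id": "t3", "failures": ["wrong_tool", "hallucinated_fact"]},
    {"id": "t4", "failures": ["ignored_instruction"]},
    {"id": "t5", "failures": ["hallucinated_fact"]},
]


def failure_rates(traces):
    """Return (category, trace count, share of all traces), most frequent first."""
    # set() so a category tagged twice on one trace is counted once for that trace.
    counts = Counter(cat for t in traces for cat in set(t["failures"]))
    total = len(traces)
    return [(cat, n, n / total) for cat, n in counts.most_common()]


for cat, n, share in failure_rates(traces):
    print(f"{cat:20s} {n:3d} {share:6.1%}")
```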

Lesson 3: Implementing Effective Evaluations

- Defining metrics using code-based and LLM-judge approaches

- Techniques for evaluating individual outputs and overall system performance

- Organizing datasets with proper structure for inputs and reference data

- Practical exercise: Building an automated evaluation pipeline
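A tiny automated pipeline might look like the sketch below: a code-based check scores each individual output against its reference data, and the per-case results roll up into a system-level pass rate. The dataset, the `generate` stand-in for the application, and the JSON `order_id` check are all hypothetical. An LLM-judge metric would slot in where `check_output` is, but it is not shown here.

```python
import json
import re

# Hypothetical dataset: each case pairs an input with reference data.
DATASET = [
    {"input": "Order 123 status?", "reference": {"order_id": "123"}},
    {"input": "Where is order 456?", "reference": {"order_id": "456"}},
    {"input": "Cancel order 789", "reference": {"order_id": "789"}},
]


def generate(user_input: str) -> str:
    """Hypothetical stand-in for the LLM application under test."""
    if user_input.startswith("Cancel"):
        return "Sure, I have cancelled it."  # free text instead of JSON: should fail
    order_id = re.search(r"\d+", user_input).group()
    return json.dumps({"order_id": order_id})


def check_output(output: str, reference: dict) -> bool:
    """Code-based metric: output must be JSON whose order_id matches the reference."""
    try:
        parsed = json.loads(output)
    except json.JSONDecodeError:
        return False
    return isinstance(parsed, dict) and parsed.get("order_id") == reference["order_id"]


def run_eval(dataset):
    """Score every case individually, then aggregate into a pass rate."""
    results = []
    for case in dataset:
        output = generate(case["input"])
        results.append({"input": case["input"], "output": output,
                        "passed": check_output(output, case["reference"])})
    pass_rate = sum(r["passed"] for r in results) / len(results)
    return pass_rate, results
```

Keeping the per-case `results` alongside the aggregate makes it easy to jump from a falling pass rate straight to the failing examples.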

Lesson 4: Collaborative Evaluation Practices

- Designing efficient team-based evaluation workflows

- Statistical methods for measuring inter-annotator agreement

- Techniques for building consensus on evaluation criteria

- Practical exercise: Collaborative alignment in breakout groups
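One widely used statistic for inter-annotator agreement is Cohen's kappa, which corrects raw agreement for the agreement expected by chance: kappa = (p_o - p_e) / (1 - p_e). The sketch below computes it for two annotators. Whether the course uses this particular statistic is an assumption, and the pass/fail labels in the usage note are hypothetical.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two annotators who labeled the same items in the same order."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two non-empty label lists of equal length")
    n = len(labels_a)
    # p_o: fraction of items where the annotators agree.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # p_e: chance agreement from each annotator's label frequencies.
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if expected == 1:
        # Both used one identical label throughout; kappa is 0/0 here,
        # and this sketch treats it as perfect agreement.
        return 1.0
    return (observed - expected) / (1 - expected)
```

For example, with annotator A labeling six traces `pass, pass, fail, pass, fail, pass` and annotator B `pass, fail, fail, pass, fail, pass`, raw agreement is 5/6 but kappa is about 0.67, because half of the agreement would be expected by chance.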

Lesson 5: Architecture-Specific Evaluation Strategies

- Evaluating RAG systems for retrieval relevance and factual accuracy

- Testing multi-step pipelines to identify error propagation

- Assessing appropriate tool use and multi-turn conversation quality

- Multi-modal evaluation for text, image, and audio interactions

- Practical exercise: Creating targeted test suites for different architectures
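For the retrieval half of a RAG system, precision@k and recall@k are standard starting points: given document ids judged relevant for a query, they measure how many of the top-k retrieved documents are relevant and how many relevant documents made it into the top k. This is a minimal sketch with hypothetical document ids. Checking the factual accuracy of the generated answer needs separate checks that are not shown here.

```python
def precision_recall_at_k(retrieved, relevant, k):
    """Precision@k and recall@k for a single query.

    retrieved: ranked list of document ids returned by the retriever
    relevant:  set of document ids judged relevant for this query
    """
    if k <= 0:
        raise ValueError("k must be positive")
    top_k = retrieved[:k]
    hits = sum(1 for doc_id in top_k if doc_id in relevant)
    precision = hits / k  # divides by k even if fewer than k docs came back
    recall = hits / len(relevant) if relevant else 0.0
    return precision, recall
```

With `retrieved = ["d3", "d7", "d1", "d9", "d4"]` and `relevant = {"d1", "d4", "d8"}`, k = 3 gives precision and recall of 1/3 each, while k = 5 gives precision 0.4 and recall 2/3. Averaging these over a suite of queries gives a retriever-level score.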

Lesson 6: Production Monitoring & Continuous Evaluation

- Implementing traces, spans, and session tracking for observability

- Setting up automated evaluation gates in CI/CD pipelines

- Methods for consistent comparison across experiments

- Implementing safety and quality control guardrails

- Practical exercise: Designing an effective monitoring dashboard
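An automated evaluation gate in CI/CD can be as simple as comparing the current run's metrics against absolute floors and against the last accepted baseline. The sketch below assumes metrics are scores in [0, 1]. The metric names, thresholds, and the `max_drop` tolerance are hypothetical choices, not values from the course.

```python
from dataclasses import dataclass


@dataclass
class GateResult:
    passed: bool
    reasons: list


def evaluation_gate(metrics, baseline, min_scores, max_drop=0.02):
    """Decide whether a build may ship based on offline eval metrics.

    metrics / baseline: dicts of metric name -> score in [0, 1]
    min_scores:        absolute floor per metric (hypothetical thresholds)
    max_drop:          largest tolerated regression versus the baseline
    """
    reasons = []
    for name, floor in min_scores.items():
        score = metrics.get(name)
        if score is None:
            reasons.append(f"{name}: missing from current run")
            continue
        if score < floor:
            reasons.append(f"{name}: {score:.3f} below floor {floor:.3f}")
        if name in baseline and baseline[name] - score > max_drop:
            reasons.append(f"{name}: dropped {baseline[name] - score:.3f} vs baseline")
    return GateResult(passed=not reasons, reasons=reasons)
```

In a CI step, the script would print `result.reasons` and end with `sys.exit(0 if result.passed else 1)` so that a failed gate blocks the merge. Checking the regression against a baseline as well as an absolute floor catches quiet degradations that still clear the floor.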

Lesson 7: Efficient Continuous Human Review Systems

- Strategic sampling approaches for maximizing review impact

- Optimizing interface design for reviewer productivity

- Practical exercise: Implementing a continuous feedback collection system
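One common way to stretch a limited review budget is to mix targeted and random sampling: spend part of the budget on traces that automated signals already flag (for example negative user feedback or low judge scores), and the rest on a uniform random sample so reviewers still see failures the signals miss. This is a sketch under that assumption. The `flagged` and `score` fields and the 50/50 split are hypothetical.

```python
import random


def select_for_review(traces, budget, flagged_share=0.5, seed=0):
    """Pick up to `budget` trace ids for human review.

    A `flagged_share` of the budget goes to flagged traces, lowest score first;
    the remainder is a uniform random sample of all other traces.
    """
    rng = random.Random(seed)  # seeded so the selection is reproducible
    flagged = sorted((t for t in traces if t["flagged"]), key=lambda t: t["score"])
    n_flagged = min(len(flagged), int(budget * flagged_share))
    chosen = flagged[:n_flagged]
    chosen_ids = {t["id"] for t in chosen}
    rest = [t for t in traces if t["id"] not in chosen_ids]
    n_random = min(len(rest), budget - n_flagged)
    chosen += rng.sample(rest, n_random)
    return [t["id"] for t in chosen]
```

The random share also gives an approximately unbiased view of overall quality, which a purely flag-driven queue cannot.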

Lesson 8: Cost Optimization

- Quantifying value versus expenditure in LLM applications

- Intelligent model routing based on query complexity

- Practical exercise: Optimizing a real-world application for cost efficiency
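Routing by query complexity sends easy requests to a cheap model and reserves the expensive one for hard requests. The sketch below uses a deliberately crude heuristic (query length plus a few marker phrases) as a stand-in for whatever complexity signal a real system would use. The model names, prices, markers, and threshold are all hypothetical, and any routing rule should itself be validated with evals so the savings do not come at an unmeasured quality cost.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Model:
    name: str
    usd_per_1k_tokens: float  # hypothetical blended price


# Hypothetical models and prices; substitute real provider values.
SMALL = Model("small-model", 0.0005)
LARGE = Model("large-model", 0.01)

# Crude complexity signals, for illustration only.
HARD_MARKERS = ("explain why", "compare", "step by step", "analyze", "prove")


def complexity_score(query: str) -> float:
    """Heuristic complexity in [0, 1] from query length and marker phrases."""
    q = query.lower()
    length_part = min(len(q.split()) / 100, 1.0)
    marker_part = 1.0 if any(m in q for m in HARD_MARKERS) else 0.0
    return 0.5 * length_part + 0.5 * marker_part


def route(query: str, threshold: float = 0.4) -> Model:
    """Send simple queries to the cheap model and complex ones to the strong model."""
    return LARGE if complexity_score(query) >= threshold else SMALL


def estimated_cost(queries, tokens_per_query=800):
    """Compare the cost of routed traffic with always using the large model."""
    routed = sum(route(q).usd_per_1k_tokens for q in queries) * tokens_per_query / 1000
    all_large = len(queries) * LARGE.usd_per_1k_tokens * tokens_per_query / 1000
    return routed, all_large
```

Here "What are your opening hours?" goes to the small model, while "Compare plan A and plan B and explain why one is cheaper" goes to the large one.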